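```
User-agent: *
Disallow: /

User-agent: Googlebot-Image
Allow: /images/
Disallow: /
```

This robots.txt file tells all search engine crawlers not to crawl any page on the website, with one exception: Googlebot-Image may crawl the images directory. Google's crawlers follow only the most specific group that matches their user agent, so Googlebot-Image ignores the group for all crawlers (User-agent: *) entirely. Its own group therefore repeats Disallow: /, because Allow: /images/ on its own would leave the whole site open to it. Each User-agent line is followed directly by its rules, since some parsers treat a blank line as the end of a group.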
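To check how a parser actually reads this example, you can feed it to Python's standard urllib.robotparser module, which parses robots.txt text offline. The sketch compares three variants: the original layout with a blank line after each User-agent line, the same rules without those blank lines, and a variant whose Googlebot-Image group also ends with Disallow: /. Python's parser is not Google's: it starts a new record at a blank line and applies the first matching rule, so treat the output as one parser's view. The www.example.com URLs are placeholders.

```python
from urllib.robotparser import RobotFileParser


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# Original layout: a blank line after each User-agent line.
BLANK_LINES = "User-agent: *\n\nDisallow: /\n\nUser-agent: Googlebot-Image\n\nAllow: /images/\n"

# Same rules, with each User-agent line followed directly by its rules.
ALLOW_ONLY = "User-agent: *\nDisallow: /\n\nUser-agent: Googlebot-Image\nAllow: /images/\n"

# Googlebot-Image group that also blocks everything outside /images/.
# Allow comes first because urllib.robotparser applies the first matching rule.
RESTRICTED = ALLOW_ONLY + "Disallow: /\n"

SITE = "https://www.example.com"
for name, text in [("BLANK_LINES", BLANK_LINES), ("ALLOW_ONLY", ALLOW_ONLY), ("RESTRICTED", RESTRICTED)]:
    parser = build_parser(text)
    print(
        name,
        parser.can_fetch("Googlebot", SITE + "/about/"),
        parser.can_fetch("Googlebot-Image", SITE + "/images/logo.png"),
        parser.can_fetch("Googlebot-Image", SITE + "/about/"),
    )
# BLANK_LINES True True True    (each blank line cuts the rules off from their User-agent)
# ALLOW_ONLY False True True    (Allow alone leaves /about/ open to Googlebot-Image)
# RESTRICTED False True False
```

The first row shows why the blank lines matter to this parser: it discards every rule and allows everything. The second shows that Allow: /images/ by itself does not restrict Googlebot-Image to that directory.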

Here is a breakdown of the directives in this robots.txt file:

- User-agent: This directive names the crawler that the rules after it apply to. The asterisk (*) is a wildcard that matches every crawler, so the first group applies to any crawler that has no more specific group of its own; the second group applies only to Googlebot-Image.

- Disallow: This directive tells the crawler not to crawl URLs whose path starts with the given value. Since every path starts with a slash, Disallow: / blocks the entire website.

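- Allow: This directive permits crawling of URLs whose path starts with the given value; it does not instruct the crawler to visit them. When an Allow rule and a Disallow rule in the same group both match a URL, Google applies the more specific (longer) rule, so Allow: /images/ takes precedence over Disallow: /. In this file, it permits Googlebot-Image to crawl the images directory.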
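Google's documentation and RFC 9309 resolve Allow/Disallow conflicts by longest match: the matching rule with the longest path wins, and Allow wins a tie. Python's urllib.robotparser instead applies the first matching rule in file order, so there the order of lines matters. The sketch below is a simplified longest-match resolver for illustration only; is_allowed and the (kind, prefix) tuples are hypothetical names, and the * and $ wildcards that Google supports are not handled.

```python
from typing import List, Tuple

# Hypothetical rule representation: ("allow" or "disallow", path prefix).
# Wildcards (* and $) are deliberately not supported in this sketch.
Rule = Tuple[str, str]


def is_allowed(rules: List[Rule], path: str) -> bool:
    """Resolve Allow/Disallow for one group by longest match.

    The matching rule with the longest path wins; on a tie, Allow wins.
    A path that no rule matches is allowed. Empty values match nothing.
    """
    best_length = -1
    allowed = True
    for kind, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue
        if len(prefix) > best_length or (len(prefix) == best_length and kind == "allow"):
            best_length = len(prefix)
            allowed = kind == "allow"
    return allowed


# A group that blocks everything except /images/, written with Disallow first.
group = [("disallow", "/"), ("allow", "/images/")]
print(is_allowed(group, "/images/logo.png"))  # True: /images/ (8 chars) beats / (1 char)
print(is_allowed(group, "/about/"))           # False: only Disallow: / matches
print(is_allowed(list(reversed(group)), "/images/logo.png"))  # True: order does not matter
```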

Here are some other directives that you can use in your robots.txt file:

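- Host: A non-standard directive that Yandex used to name a site's preferred domain (its main mirror). Google does not support it, and Yandex has since deprecated it in favor of redirects.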

- Crawl-delay: A non-standard directive that asks a crawler to wait the given number of seconds between requests to your website. Google ignores it; some other crawlers, such as Bingbot, honor it.

- Sitemap: This directive gives the absolute URL of your website's sitemap. It is not tied to any User-agent group, and you can list more than one.
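Parsers differ in which of these extra directives they read. As one example, Python's urllib.robotparser (Python 3.8 or later for site_maps) exposes Crawl-delay and Sitemap values but silently skips Host. The file below is hypothetical; the domain and the sitemap_index.xml name are placeholders. This only shows how one parser reads the lines; whether a given crawler acts on them is up to that crawler.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file using the extra directives; domain and sitemap name are examples.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 10
Disallow: /wp-admin/

Host: www.example.com
Sitemap: https://www.example.com/sitemap_index.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.crawl_delay("Bingbot"))   # 10 (taken from the * group)
print(parser.site_maps())              # ['https://www.example.com/sitemap_index.xml']
print(parser.can_fetch("Bingbot", "https://www.example.com/wp-admin/"))  # False
# There is no accessor for Host: the parser skips field names it does not recognise.
```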

For more information on robots.txt files, you can refer to the following resources:

- Robots Exclusion Standard: https://en.wikipedia.org/wiki/Robots_exclusion_standard

- Google Search Central robots.txt documentation: https://developers.google.com/search/docs/crawling-indexing/robots/intro

- Moz Robots.txt documentation: https://moz.com/learn/seo/robotstxt

Here is how to replace the robots.txt file managed by Yoast SEO with a new one:

- In your WordPress dashboard, go to Yoast SEO > Tools > File editor.

- In the File editor section, click on the Robots.txt tab.

- Click on the Delete button to delete the existing robots.txt file.

- Click on the Create new file button to create a new robots.txt file.

- In the new robots.txt file, enter the directives that you want to use to control how search engines crawl your website.

- Save the new robots.txt file.

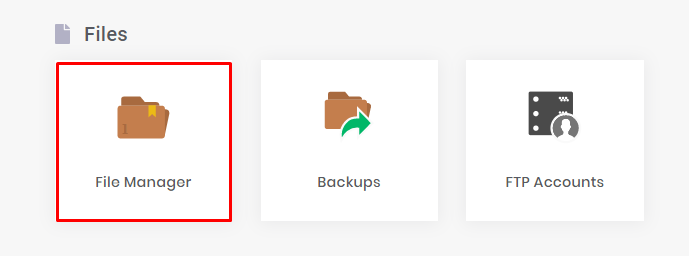

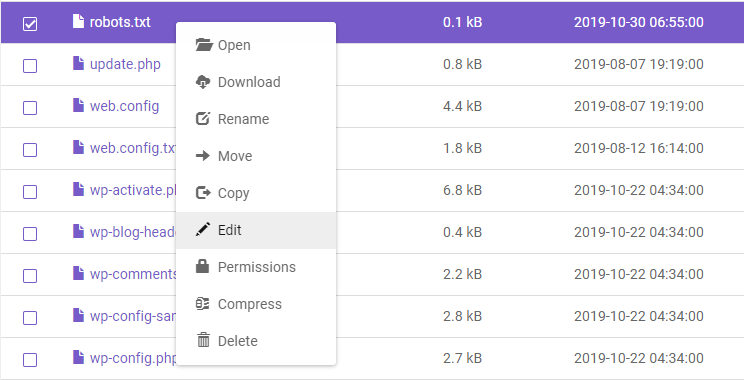

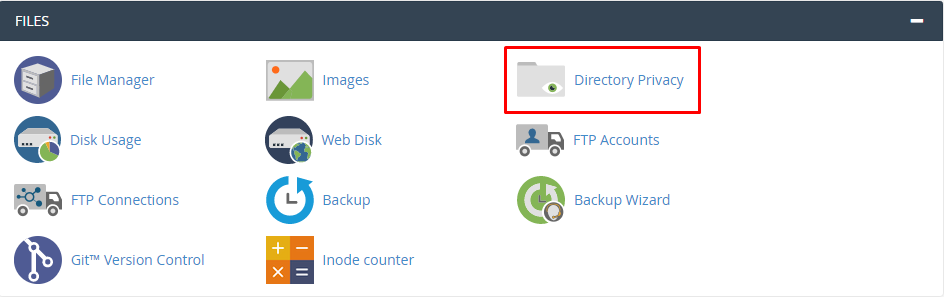
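Before saving, you may want to sanity-check what you typed into the editor. The function below is a rough, hypothetical checker (lint_robots_txt is not part of Yoast SEO or any library) and not a full robots.txt parser. It flags rules that appear before any User-agent line, blank lines between a User-agent line and its rules, non-standard fields that Google ignores, and unknown or misspelled field names.

```python
from typing import List

# Fields defined by RFC 9309, plus Sitemap, which major search engines support.
STANDARD_FIELDS = {"user-agent", "allow", "disallow", "sitemap"}
# Non-standard fields that Google ignores (other crawlers may honour them).
NON_STANDARD_FIELDS = {"crawl-delay", "host"}


def lint_robots_txt(text: str) -> List[str]:
    """Return warnings for a robots.txt draft. A rough check, not a full parser."""
    warnings: List[str] = []
    seen_user_agent = False
    awaiting_rules = False  # True after a User-agent line until its first rule
    for number, raw in enumerate(text.splitlines(), start=1):
        if not raw.strip():
            if awaiting_rules:
                warnings.append(f"line {number}: blank line between User-agent and its rules")
            continue
        line = raw.split("#", 1)[0].strip()  # drop a trailing comment
        if not line:
            continue  # comment-only line
        if ":" not in line:
            warnings.append(f"line {number}: missing ':'")
            continue
        field = line.split(":", 1)[0].strip().lower()  # field names are case-insensitive
        if field == "user-agent":
            seen_user_agent = True
            awaiting_rules = True
        elif field in ("allow", "disallow"):
            if not seen_user_agent:
                warnings.append(f"line {number}: {field} rule before any User-agent line")
            awaiting_rules = False
        elif field in NON_STANDARD_FIELDS:
            warnings.append(f"line {number}: {field} is non-standard and ignored by Google")
        elif field not in STANDARD_FIELDS:
            warnings.append(f"line {number}: unknown field '{field}'")
    return warnings


draft = "User-agent: *\n\nDisallow: /\nHost: www.example.com\nDisalow: /tmp/\n"
for warning in lint_robots_txt(draft):
    print(warning)
# line 2: blank line between User-agent and its rules
# line 4: host is non-standard and ignored by Google
# line 5: unknown field 'disalow'
```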

Saving in the Yoast SEO file editor writes robots.txt directly to your website's root directory. If you create the file elsewhere instead, for example in a text editor, upload it to your website's root directory using a File Transfer Protocol (FTP) client or your web hosting provider's file manager.
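If you take the FTP route, the upload can also be scripted. The sketch below uses Python's standard ftplib with FTP_TLS (FTP over TLS), which assumes your host supports encrypted FTP. upload_robots_txt is a hypothetical helper; the host name, credentials, and web_root folder (often something like public_html, but it varies by host) are placeholders. The ftp_factory parameter exists only so the function can be tried without a real server.

```python
import io
from ftplib import FTP_TLS


def upload_robots_txt(host: str, user: str, password: str, content: str,
                      web_root: str, ftp_factory=FTP_TLS) -> None:
    """Upload `content` as robots.txt into the `web_root` folder on an FTP(S) server."""
    with ftp_factory(host) as ftp:  # connects; the context manager quits on exit
        ftp.login(user, password)
        if hasattr(ftp, "prot_p"):
            ftp.prot_p()  # FTP_TLS only: encrypt the data connection as well
        ftp.cwd(web_root)
        ftp.storbinary("STOR robots.txt", io.BytesIO(content.encode("utf-8")))


# Example call (host, credentials and web root are placeholders):
# upload_robots_txt("ftp.example.com", "username", "password",
#                   "User-agent: *\nDisallow: /\n", web_root="public_html")
```

After uploading, opening https://your-domain/robots.txt in a browser is a quick way to confirm the server is serving the new file.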

Here are some additional things to keep in mind when creating a robots.txt file:

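- The file must be named robots.txt (all lowercase) and placed in the root directory of your website, so that it is served at a URL such as https://www.example.com/robots.txt. It applies only to that protocol and host; a subdomain needs its own file.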

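- Directive names such as User-agent and Disallow are not case-sensitive, but the paths in Allow and Disallow rules are: Disallow: /Private/ does not block /private/.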

- You can use the Allow and Disallow directives to control how search engines crawl your website.

- You can use the User-agent directive to specify which crawlers the directives apply to.
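Two of these points can be checked with Python's standard library alone. robots_url is a hypothetical helper that derives the robots.txt URL governing a page (same scheme and host, including any port, with a fixed root path), and the parser lines show that urllib.robotparser accepts upper-case directive names while comparing paths case-sensitively. The example URLs and the AnyBot agent name are placeholders.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL for the scheme and host (including any port) of page_url."""
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_url("https://blog.example.com/2024/some-post/?ref=home"))
# https://blog.example.com/robots.txt  (the subdomain has its own file)

# urllib.robotparser lower-cases directive names but compares paths as written.
parser = RobotFileParser()
parser.parse(["USER-AGENT: *", "DISALLOW: /Private/"])
print(parser.can_fetch("AnyBot", "https://www.example.com/Private/report.html"))  # False
print(parser.can_fetch("AnyBot", "https://www.example.com/private/report.html"))  # True
```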

I hope this helps! Let me know if you have any other questions.